01 / The problem

Rec sports fails at the one moment that determines retention: the transition from "I signed up" to "I belong here."

Recreational sports platforms have optimized for logistics — scheduling, payments, performance tracking — while abandoning users at the moment that actually decides whether they stay. Meanwhile, costs have turned participation into a luxury: adult leagues routinely run $700–$1,060 per season in major metros, and average family spending on a child's primary sport rose 46% between 2019 and 2024.

I mapped the 16 jobs a rec-sports participant needs done, across three tiers: Access & Readiness, Social Integration, and Identity & Belonging. Then I rated 13 competitor platforms against all 16.

A 2023 review of 29 adult sports-participation studies found that the mental-health benefits of rec sports flow through two pathways — physical activity and social relationships — with "belonging" explicitly identified as a mechanism. One included study found no relationship at all between activity volume and wellbeing outcomes. Platforms optimizing for access and scheduling while ignoring social integration may be optimizing the wrong pathway entirely.

02 / The insight

Every activity has two fits. Every incumbent only measures one.

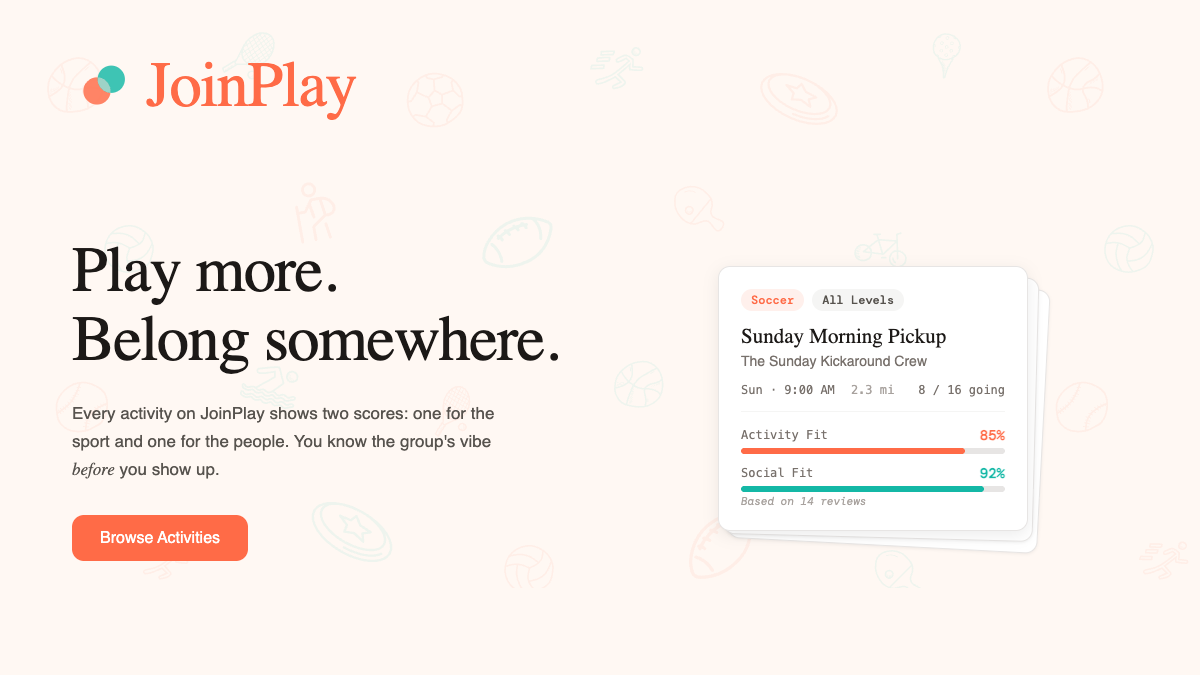

The reframe was separating Activity Fit (skill match, location, schedule) from Social Fit (welcoming, competitive intensity, social continuity). Both matter. Either alone produces bad matches and silent churn.

That became the core product hypothesis: if both fits can be quantified and made visible before a user commits, the social-integration tax on newcomers collapses.

- Activity Fit — two required inputs per sport at onboarding (skill tier, availability), plus two optional signals (years experience, frequency). Self-reports cross-validate against post-session feedback over time, correcting for the well-documented tendency to overestimate skill at sign-up.

- Social Fit — scores a group on three dimensions: newcomer welcoming, competitive intensity, and social continuity. Weighting starts from JTBD priority ranking; once feedback data accumulates, weights shift to whichever dimension most predicts whether newcomers return.

- Two scores visible before RSVP — the fit scores appear on every activity card, before the user commits. Every group knows it's being scored, which changes incentive dynamics at the supply side.

A user can hit 94 on Activity Fit and 66 on Social Fit for the same event — near-perfect skill/location/schedule match, but a competitive-intensity gap that would make them quietly never come back. That's the exact mismatch the category has been invisibly producing for years.
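One way to picture the Social Fit comparison is as a weighted gap between what a user prefers and what a group reports on the three dimensions. The sketch below is illustrative only: the 0-100 scale, the absolute-gap formula, the example weights, and the function name `social_fit` are all assumptions, not JoinPlay's actual scoring model.

```python
# Hypothetical sketch: Social Fit as 100 minus a weighted average gap.
# The 0-100 scale, the formula, and the weights are assumptions for illustration.

DIMENSIONS = ("newcomer_welcoming", "competitive_intensity", "social_continuity")


def social_fit(user_prefs, group_profile, weights):
    """Return a 0-100 score; 100 means the profiles match on every dimension."""
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_gap = sum(
        weights[d] * abs(user_prefs[d] - group_profile[d]) for d in DIMENSIONS
    ) / total_weight
    return round(100.0 - weighted_gap, 1)


# A user who wants low intensity looking at a high-intensity group.
user = {"newcomer_welcoming": 80, "competitive_intensity": 20, "social_continuity": 60}
group = {"newcomer_welcoming": 80, "competitive_intensity": 80, "social_continuity": 60}
weights = {"newcomer_welcoming": 0.25, "competitive_intensity": 0.5, "social_continuity": 0.25}

print(social_fit(user, group, weights))  # 70.0
```

Here the only gap is on competitive intensity, which is the kind of mismatch the 94/66 example describes. Changing `weights` is where a shift toward the most predictive dimension would show up.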

03 / Why now, and where to enter

Mid-size U.S. cities are the beachhead.

242M Americans participated in sports or fitness in 2023 — ~80% of the population aged six and older. Participation intent has grown 118% since 2023, and one in five Americans now plays in a rec league or plans to (47% among Gen Z). The market is real and growing.

Major metros already have dense league infrastructure. Mid-size cities — the 441 U.S. cities between 75K and 500K residents, covering 64M Americans across 45 states — don't. Discovery is harder, fit is impossible to assess, and no incumbent has meaningfully served them. The need is more acute, not less. It's also a strategic advantage: in markets where even logistics is underserved, JoinPlay can communicate value before every social-integration feature is live.

Within that beachhead, three segments by social motivation:

- Primarily Social players — use sports as a vehicle for friendship. High LTV, high word-of-mouth. No incumbent serves them because every major competitor is built around the activity, not the relationship.

- Balanced Social-Activity players — want both dimensions optimized. The largest addressable segment and the highest-complexity problem. Primary target.

- Activity-Focused players — social benefits are secondary. Existing fitness apps serve them adequately. Secondary market.

04 / The system

Three objects, two mirrored profiles, one fit score per dimension.

JoinPlay runs on three primary objects — users, groups, and activities. Users build a profile rating themselves on skill, availability, and social preferences. Groups do the same across mirrored dimensions. Groups post activities; every activity card surfaces Activity Fit and Social Fit scores derived from comparing the user profile against the group profile. Post-session feedback refines both the group's validated scores and the user's own preference profile. The next match is more accurate than the last.
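The three objects and the feedback loop described above can be sketched roughly as follows. This is a minimal illustration, not the production schema: the field names, the plain per-dimension mean used to refine a group's profile, and the example group "Tuesday Pickup" are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    name: str
    preferences: dict  # the user's own ratings on the mirrored dimensions


@dataclass
class Group:
    name: str
    self_reported: dict  # culture profile the group enters itself
    feedback: list = field(default_factory=list)  # post-session ratings

    def record_feedback(self, ratings: dict) -> None:
        self.feedback.append(ratings)

    def current_profile(self) -> dict:
        """Mean of feedback per dimension, falling back to the self-report."""
        if not self.feedback:
            return dict(self.self_reported)
        return {
            dim: sum(r[dim] for r in self.feedback) / len(self.feedback)
            for dim in self.self_reported
        }


@dataclass
class Activity:
    title: str
    group: Group  # activities are posted by groups


group = Group("Tuesday Pickup", {"competitive_intensity": 40})
group.record_feedback({"competitive_intensity": 60})
group.record_feedback({"competitive_intensity": 80})
print(group.current_profile())  # {'competitive_intensity': 70.0}
```

The group reported itself at 40, but attendees experienced something closer to 70, so the next match would be scored against the refined profile rather than the self-report.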

Three product principles separate it from the category:

- Scores before commitment. Activity Fit and Social Fit visible on every card. No hidden ranking.

- Scoring groups, not individuals. People fear being judged. Groups don't. Users reflect on their own experience, not on each other. This was a deliberate pivot away from peer-rating models that bleed trust.

- Unvalidated scores are labeled. New groups show a self-reported culture profile clearly distinguished from a validated score. Validation requires a minimum feedback threshold.
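The labeling rule in the last principle might look something like this. The source does not state the feedback threshold, so `MIN_FEEDBACK = 5` is a placeholder, and the label strings and function name are hypothetical too.

```python
# Hypothetical threshold: the actual minimum is not stated in the source.
MIN_FEEDBACK = 5


def score_label(feedback_count: int, min_feedback: int = MIN_FEEDBACK) -> str:
    """Label a group's score by whether enough post-session feedback exists."""
    if feedback_count < 0:
        raise ValueError("feedback_count cannot be negative")
    return "validated" if feedback_count >= min_feedback else "self-reported"


print(score_label(0))  # self-reported
print(score_label(5))  # validated
```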

Accessible entry. Creating a group, joining an activity, and attending sessions cost nothing. After three attended activities, continued access to the intelligence layer requires a $5–8/mo subscription (pending WTP work). The paywall is tied to demonstrated value, not a calendar: three sessions is enough to experience the fit scoring working.
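As a rough sketch of that gating rule: participation stays free, and only the intelligence layer sits behind the subscription once three activities have been attended. The function names are hypothetical, and treating the fit scores as the whole "intelligence layer" is an assumption.

```python
# From the text: the paywall applies after three attended activities.
FREE_ATTENDED_ACTIVITIES = 3


def can_join_activity() -> bool:
    """Creating groups, joining, and attending are always free."""
    return True


def can_access_fit_scores(attended: int, subscribed: bool) -> bool:
    """Assumed gate: fit scores are free until three activities are attended."""
    if subscribed:
        return True
    return attended < FREE_ATTENDED_ACTIVITIES


print(can_access_fit_scores(attended=2, subscribed=False))  # True
print(can_access_fit_scores(attended=3, subscribed=False))  # False
```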

05 / What I'd measure instead of vanity

Since there are no real users yet, I won't pretend. The metrics worth watching post-launch are the ones tied to the social-integration thesis — not signups or DAU.

These are diagnostic, not vanity. If Belonging Confidence holds at 4.0+ while Newcomer Absorption stays above 50%, the core hypothesis is working. If signups grow but those two drift down, we're scaling a broken experience — which is the specific failure mode I'm designing against.
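The diagnostic logic above could be expressed as a simple check. The 4.0 and 50% thresholds come from the text. Everything else is an assumption: that Newcomer Absorption is a fraction between 0 and 1, that a single snapshot below threshold stands in for "drifting down", and the function name and return strings.

```python
BELONGING_TARGET = 4.0    # from the text: "holds at 4.0+"
ABSORPTION_TARGET = 0.50  # from the text: "stays above 50%"


def diagnose(belonging_confidence: float, newcomer_absorption: float,
             signups_growing: bool) -> str:
    """Classify a metrics snapshot against the social-integration thesis."""
    on_target = (
        belonging_confidence >= BELONGING_TARGET
        and newcomer_absorption > ABSORPTION_TARGET
    )
    if on_target:
        return "core hypothesis holding"
    if signups_growing:
        return "scaling a broken experience"
    return "below target, not yet scaling"


print(diagnose(4.2, 0.55, signups_growing=True))  # core hypothesis holding
print(diagnose(3.6, 0.40, signups_growing=True))  # scaling a broken experience
```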

06 / Where it stands

Pre-launch, tightening core loops before I start marketing.

The MVP is live at joinplay.io, with the social-integration feedback loop in active build. Public launch follows the community-embedded GTM plan: local rec-center partnerships, problem-based search capture ("pickup basketball near me"), and word-of-mouth amplification before any paid acquisition. Quality over volume: healthy density in one city beats fragmented scale across five.

07 / What I'd take to the next role

- A working demonstration that a single PM, plus an agentic stack, can execute 0→1 scope that used to require a pod — discovery, PRD, architecture, build, and launch.

- Operational fluency with the messy parts of 0→1: pricing, legal, payments, ops, analytics instrumentation, safety & moderation design.

- Hard-won conviction that transparency in ranking systems is a product strategy, not a compliance feature — and that measuring the right thing (belonging, not RSVPs) is the single highest-leverage PM decision in a community product.